Dataset scores vs. decision confidence

We have spent years improving data quality. But in AI-enabled, real-time data ecosystems, one question matters more than any table's quality score: did the decision turn out right?

A dataset can pass every validation check and still lead to the wrong outcome.

That is the gap Decision Quality addresses. It does not replace data quality. It extends it from static validation into decision confidence, traceability, model influence, and outcome validation.

Infographic

Visual framework

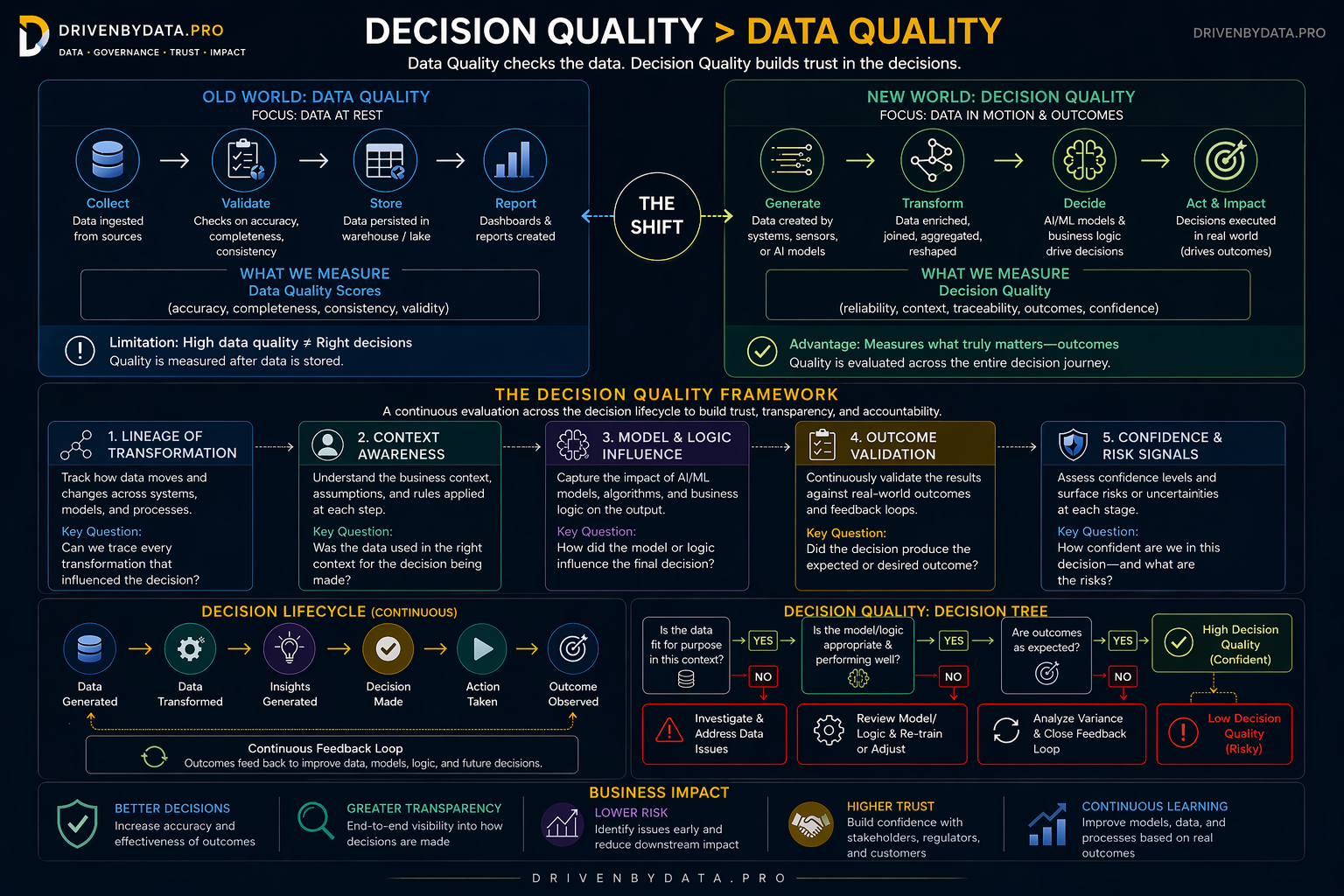

Full diagram: shift from data at rest to data in motion, pillars, lifecycle, decision tree, and business impact.


At rest vs. end-to-end

The shift

Data Quality

Validates data after ingestion. Measures accuracy, completeness, consistency, and validity once the data is stored.

Decision Quality

Evaluates the full decision path: how data was generated, transformed, interpreted, acted on, and whether the outcome was reliable.
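To make the contrast concrete, here is a minimal Python sketch. The record type, field names, threshold, and loan rule are all hypothetical, invented only for illustration. The stored row passes its at-rest checks, yet the decision rests on an unchecked assumption about currency.

```python
from dataclasses import dataclass


@dataclass
class CreditRecord:
    """Hypothetical stored record; every field name is illustrative."""
    customer_id: str
    monthly_income: float
    currency: str


def passes_data_quality(record: CreditRecord) -> bool:
    """At-rest checks: completeness and validity of the stored values."""
    complete = bool(record.customer_id) and bool(record.currency)
    valid = record.monthly_income >= 0 and len(record.currency) == 3
    return complete and valid


def approve_loan(record: CreditRecord, threshold_usd: float = 3000.0) -> bool:
    """Hypothetical decision rule that silently assumes income is in USD."""
    return record.monthly_income >= threshold_usd


# Income is stored in Japanese yen, but the rule compares the raw number
# against a US dollar threshold without looking at the currency field.
record = CreditRecord(customer_id="C-001", monthly_income=100000.0, currency="JPY")

data_quality_ok = passes_data_quality(record)  # the stored row is clean
approved = approve_loan(record)                # approved on a wrong assumption

print(f"data quality ok: {data_quality_ok}, approved: {approved}")
```

Nothing in the at-rest checks looks at how the value is interpreted downstream, which is the part of the path Decision Quality is meant to cover.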

Operational model

The Decision Quality framework

The framework has five practical pillars that help teams move from static scoring to dynamic confidence.

Lineage of Transformation

Track how data moves and changes across systems, models, and processes.

Context Awareness

Understand the business meaning, assumptions, and rules used at each step.

Model & Logic Influence

Capture how AI models, algorithms, and business logic influence the output.

Outcome Validation

Compare decisions against real-world results and close feedback loops.

Confidence & Risk Signals

Surface uncertainty, exceptions, and decision risk before impact spreads.
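One way to picture the five pillars together is as fields on a single decision record. The sketch below is an assumption about how a team might model this in Python, not a prescribed schema: the class name, field names, sample values, and the 0.7 confidence threshold are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class DecisionRecord:
    """Hypothetical record carrying one field group per pillar."""
    decision_id: str
    # Lineage of Transformation: ordered steps the data passed through.
    lineage: List[str] = field(default_factory=list)
    # Context Awareness: business assumptions and rules in force.
    context: Dict[str, str] = field(default_factory=dict)
    # Model & Logic Influence: which model or rule produced the output.
    model_version: str = "unknown"
    # Confidence & Risk Signals: uncertainty and raised flags.
    confidence: float = 0.0
    risk_flags: List[str] = field(default_factory=list)
    # Outcome Validation: what was decided vs. what actually happened.
    predicted: Optional[bool] = None
    observed: Optional[bool] = None

    def outcome_validated(self) -> Optional[bool]:
        """Return None until a real-world outcome has been recorded."""
        if self.predicted is None or self.observed is None:
            return None
        return self.predicted == self.observed


record = DecisionRecord(
    decision_id="D-42",
    lineage=["crm_export", "currency_normalization", "risk_scoring"],
    context={"income_currency": "USD"},
    model_version="risk-model-v3",
    confidence=0.62,
    predicted=True,
)

# Surface a risk signal before the outcome is known (threshold is illustrative).
if record.confidence < 0.7:
    record.risk_flags.append("low_confidence")

# Later, the real-world result arrives and closes the feedback loop.
record.observed = False
print(record.outcome_validated(), record.risk_flags)
```

Keeping all five in one record means a failed outcome can be traced back to the lineage step, assumption, or model version that contributed to it.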

Branching checks

Decision tree

A simple way to operationalize Decision Quality is to evaluate each decision path through a few branching questions.

Is the data fit for purpose in this business context? If not, investigate data quality, transformation lineage, model logic, or business assumptions.

Is the model, logic, or rule performing as expected? If not, review model rules, retrain if needed, or adjust the decision logic.

Are the observed outcomes aligned with expectations? If not, analyze variance and close the feedback loop.

If every answer is yes: high decision confidence. Continue monitoring and learning.

If any answer is no: low decision confidence. Treat it as a risk signal, not just a data defect.
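The branching questions can be sketched as a small Python function. This is an illustration under stated assumptions, not a reference implementation: it assumes each question maps to one follow-up action, in the order given, and that evaluation stops at the first failed check. The enum, function, and parameter names are hypothetical.

```python
from enum import Enum
from typing import Tuple


class Confidence(Enum):
    HIGH = "high"
    LOW = "low"


def evaluate_decision_path(
    data_fit_for_purpose: bool,
    logic_performing: bool,
    outcomes_aligned: bool,
) -> Tuple[Confidence, str]:
    """Walk the three questions in order; stop at the first 'no' (assumption)."""
    if not data_fit_for_purpose:
        return (
            Confidence.LOW,
            "Investigate data quality, transformation lineage, "
            "model logic, or business assumptions.",
        )
    if not logic_performing:
        return (
            Confidence.LOW,
            "Review model rules, retrain if needed, or adjust the decision logic.",
        )
    if not outcomes_aligned:
        return Confidence.LOW, "Analyze variance and close the feedback loop."
    return Confidence.HIGH, "Continue monitoring and learning."


level, action = evaluate_decision_path(
    data_fit_for_purpose=True,
    logic_performing=False,
    outcomes_aligned=True,
)
print(level.value, "-", action)
```

A team that prefers to see every failing check at once could collect the actions in a list instead of returning early; the early return simply mirrors a top-down walk through the tree.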

Governance · engineering · AI · business

Why it matters

Decision Quality creates a bridge between governance, data engineering, AI systems, and business outcomes. It pushes teams to measure not only whether data is clean, but whether the decision path is transparent, explainable, and reliable.

We don’t just need better data quality. We need better ways to trust the decisions built on top of it.

About the author

I help organizations turn governance from a policy layer into an operating model, connecting data quality, metadata, stewardship, platform architecture, and trusted consumption across modern cloud ecosystems.

My work has consistently focused on the point where business trust breaks down: not only in bad source data, but in weak transformation controls, disconnected metadata, and ungoverned decision pipelines.